GALLIUM NITRIDE AI is nothing without power: why GaN is becoming the backbone of the intelligence era

Artificial intelligence is changing everything, from hyperscale data centers to edge robotics and autonomous systems. But one basic limit sits behind every neural network inference and training cycle: how efficiently power is delivered. The rapid growth of AI workloads is not only a computing challenge; it is increasingly a power electronics challenge.

In this context, gallium nitride (GaN) power devices are emerging as a key enabling technology. Silicon MOSFETs have been the workhorse of power conversion for decades, but the move toward higher switching frequencies, higher power density, and tighter efficiency budgets is accelerating the adoption of GaN. In many new AI power architectures, GaN is not just an improvement; it is becoming a requirement.

The real bottleneck of AI: power delivery

Modern AI infrastructure is scaling at unprecedented speed. Training clusters now operate at rack densities exceeding tens of kilowatts, and future AI platforms are moving toward even higher values. The challenge is not only supplying energy, but delivering it efficiently and locally across increasingly distributed power architectures.

Traditional silicon MOSFET technology has served the industry well for more than forty years, especially in low-voltage switching applications such as DC-DC converters and motor control systems. However, the efficiency limits imposed by silicon’s material properties are becoming increasingly evident as switching frequencies increase and power density requirements rise.

This is where GaN enters the picture.

Why GaN changes the rules of power conversion

GaN is a wide-bandgap semiconductor with intrinsic material advantages over silicon. These include higher breakdown field strength, faster switching capability, and lower parasitic capacitances. The result is a technology platform capable of operating at higher frequencies with significantly lower switching losses.

In practice, this translates into three system-level benefits:

- higher efficiency

- smaller magnetics and passives

- increased power density

These are precisely the parameters limiting next-generation AI infrastructure.

GaN enables faster switching transitions and lower losses than silicon devices operating under similar conditions. As switching frequency increases, passive components shrink, allowing designers to rethink the entire architecture of power delivery networks.
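The link between switching frequency and passive size can be illustrated with the textbook ripple equation for an ideal buck converter in continuous conduction. The sketch below is only an illustration: the 48 V to 12 V operating point, the 10 A ripple target, and the function name `buck_inductance` are assumptions chosen for the example and do not come from any specific design discussed here.

```python
def buck_inductance(v_in, v_out, f_sw, ripple_current):
    """Inductance in henries that gives a target peak-to-peak ripple
    current in an ideal buck converter (continuous conduction, no losses)."""
    duty = v_out / v_in
    return v_out * (1.0 - duty) / (f_sw * ripple_current)


if __name__ == "__main__":
    # Hypothetical operating point: 48 V -> 12 V with 10 A of ripple allowed.
    for f_sw in (100e3, 500e3, 1e6):
        inductance_uh = buck_inductance(48.0, 12.0, f_sw, 10.0) * 1e6
        print(f"{f_sw / 1e3:6.0f} kHz -> {inductance_uh:5.2f} uH")
```

For a fixed ripple target, the required inductance falls in inverse proportion to switching frequency, which is why devices that stay efficient at higher frequencies allow smaller magnetics.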

In AI servers, where board space is limited and thermal budgets are tight, these advantages are decisive.

Hyperscale computing has moved to distributed intermediate bus converters and point-of-load regulators placed closer to processors and accelerators, an architectural shift that favors GaN devices.

Low-voltage GaN transistors are already gaining ground in the sub-200 V segment, which makes up about three-quarters of the total MOSFET market. This segment includes robotics platforms, AI servers, and autonomous systems, where higher efficiency leads to better performance and lower cooling costs.

GaN's efficiency at high switching frequencies makes it possible to build compact voltage regulator modules that can be placed closer to GPUs and AI accelerators. This reduces distribution losses and improves transient response, both of which are critical for AI workloads with rapidly changing current demand.

GaN and the hyperscale AI revolution

The rise of generative AI has triggered a massive increase in energy consumption across hyperscale data centers. In many facilities, rack power density already exceeds 30 kW and is moving toward 100 kW this year.

To support these requirements, next-generation rack-level architectures are transitioning toward higher intermediate bus voltages and distributed conversion stages. GaN devices are particularly well suited to these environments because they combine high switching speed with high efficiency across a wide operating range.

In fact, GaN technology is already being deployed in advanced 800 V AI power architectures supporting next-generation accelerator platforms.
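The motivation for raising the distribution voltage follows from the basic I²R relationship: for the same delivered power, current falls in proportion to voltage and conduction loss falls with its square. The sketch below is a rough illustration only. The 100 kW rack power is the figure mentioned above, while the 48 V comparison point, the 1 mΩ path resistance, and the function name `distribution_loss` are assumptions made for the example.

```python
def distribution_loss(power_w, bus_voltage_v, path_resistance_ohm):
    """Conduction loss in watts in a distribution path carrying power_w
    at bus_voltage_v through a lumped resistance (simple I^2 * R model)."""
    current = power_w / bus_voltage_v
    return current ** 2 * path_resistance_ohm


if __name__ == "__main__":
    rack_power_w = 100e3       # 100 kW rack, as mentioned in the text
    path_resistance = 1e-3     # hypothetical 1 milliohm busbar/cable path
    for bus_v in (48.0, 800.0):  # 48 V is an assumed comparison point
        loss = distribution_loss(rack_power_w, bus_v, path_resistance)
        print(f"{bus_v:5.0f} V bus: {rack_power_w / bus_v:7.1f} A, loss {loss:7.1f} W")
```

Under these assumed values, the same path dissipates roughly 4.3 kW at 48 V but only about 16 W at 800 V, a reduction equal to the square of the voltage ratio.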

Power density: the hidden metric behind AI scaling

Compute performance is often measured in FLOPS per watt. But infrastructure scalability depends just as strongly on watts per cubic centimeter.

GaN enables higher power density compared to silicon-based solutions because its wide bandgap allows operation at higher switching frequency with reduced losses and a smaller footprint.

For AI systems, higher power density translates into:

- smaller voltage regulators

- shorter current paths

- lower thermal stress

- improved system reliability

This is especially important in edge AI platforms, autonomous machines, and robotics, where space and cooling capacity are limited.

Efficiency is the new performance metric

As AI continues to scale globally, electricity consumption is becoming a critical constraint.

Data centers are already among the fastest-growing electricity consumers worldwide. The rapid expansion of large-scale training infrastructure and inference services is accelerating this trend even further.

The International Energy Agency (IEA) projects that electricity demand from data centers, driven largely by AI, will more than double by 2030 to almost 945 TWh worldwide. This is about the same amount of electricity that a mid-sized industrialized country uses today. Hyperscale facilities already draw more than 100 MW per site, and some sites under construction are moving toward 1 GW.

At the same time, AI is becoming a tool for optimizing energy systems, improving grid efficiency, and accelerating electrification. This dual role makes power conversion efficiency a strategic enabler: without major advances in power electronics - especially high-efficiency GaN architectures - the long-term scalability of AI infrastructure will face increasing energy and thermal constraints.

In this context, even small improvements in conversion efficiency translate into enormous system-level savings.
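To give a sense of scale, the sketch below estimates how much energy a single percentage point of conversion efficiency represents at a 100 MW site. The 100 MW load is the per-site figure mentioned above. The 96% and 97% efficiencies, the assumption of constant full load all year, and the function name `annual_savings_mwh` are illustrative assumptions, not measured data.

```python
HOURS_PER_YEAR = 8760


def annual_savings_mwh(load_w, eff_old, eff_new, hours=HOURS_PER_YEAR):
    """Energy saved per year (MWh) at the converter input when the
    conversion efficiency rises from eff_old to eff_new at constant load."""
    saved_input_power_w = load_w / eff_old - load_w / eff_new
    return saved_input_power_w * hours / 1e6


if __name__ == "__main__":
    # Hypothetical case: 100 MW of IT load, efficiency 96% -> 97%.
    savings = annual_savings_mwh(100e6, 0.96, 0.97)
    print(f"Estimated savings: {savings:,.0f} MWh per year")
```

The estimate ignores cooling overhead, so the real effect of a conversion-stage improvement on total site consumption would typically be larger than this simple model suggests.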

GaN is therefore becoming central to sustainable AI scaling strategies.

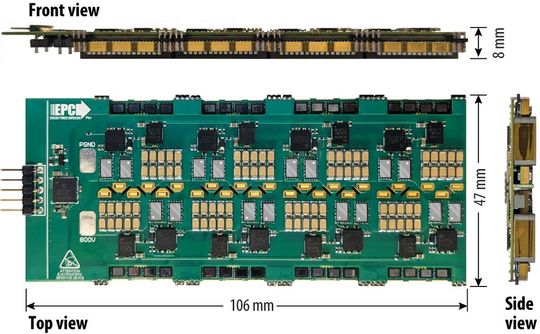

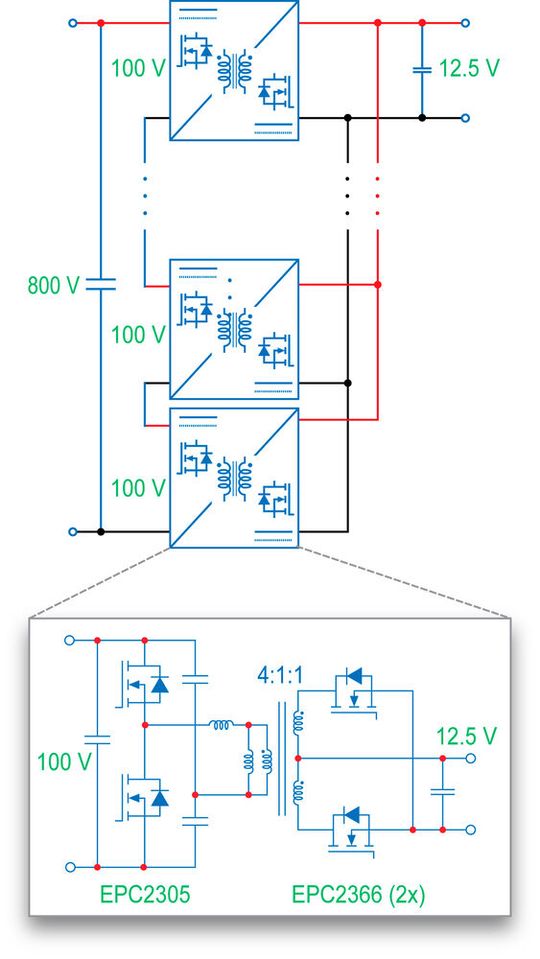

Isolated DC-DC conversion of a high-density 800 V bus using cascaded GaN modules for server applications

As hyperscale and AI servers move toward 800 V DC distribution architectures, the need for compact, high-efficiency isolated intermediate bus converters is growing. A recent GaN-based solution shows how cascaded input-series, output-parallel (ISOP) architectures can convert 800 V (±400 V) to low-voltage rails such as 12 V and 50 V with high density and scalability, using MHz-class switching at multi-kilowatt power levels.

The converter uses a primary half-bridge and a secondary push-pull topology to minimize device count while remaining efficient and cost-effective across an eight-module architecture. Each module delivers about 750 W, and together the modules deliver 6 kW at a fixed conversion ratio of 64:1. The system switches at almost 1 MHz, occupies a footprint of 5000 mm², and is 8 mm thick. It supports single-sided cooling and stronger isolation.
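The figures quoted for this converter can be cross-checked with simple arithmetic. The sketch below derives the total power, nominal output voltage, output current, and volumetric power density from the numbers in the text. The function name `converter_summary` is illustrative, and the density treats the footprint times height as the full converter volume, which is an assumption.

```python
def converter_summary(modules=8, module_power_w=750.0, bus_voltage_v=800.0,
                      conversion_ratio=64.0, footprint_mm2=5000.0, height_mm=8.0):
    """Derive headline figures from the values quoted in the article."""
    total_power_w = modules * module_power_w
    output_voltage_v = bus_voltage_v / conversion_ratio
    output_current_a = total_power_w / output_voltage_v
    volume_cm3 = footprint_mm2 * height_mm / 1000.0  # 1 cm^3 = 1000 mm^3
    return {
        "total_power_w": total_power_w,
        "output_voltage_v": output_voltage_v,
        "output_current_a": output_current_a,
        "power_density_w_per_cm3": total_power_w / volume_cm3,
    }


if __name__ == "__main__":
    for name, value in converter_summary().items():
        print(f"{name}: {value:g}")
```

With the quoted values, this works out to 6 kW at a nominal 12.5 V output, 480 A, and about 150 W/cm³.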

One of the main advantages of the modular ISOP structure is phase interleaving, which substantially reduces ripple current and the output capacitance required. A single-phase implementation needs about 390 µF to hold the output ripple to 130 mV at this power level. A four-phase interleaved configuration lowers the ripple current to 36 A, which allows the capacitance to be reduced by almost 18 times. Less output capacitance directly improves transient response, which is critical for dynamic server workloads.
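The ripple-cancelling effect of interleaving can be sketched numerically. The model below is a deliberately idealized assumption rather than the analysis behind the figures above: each phase is treated as delivering a rectified sinusoidal current, the phases are shifted evenly over a half-period, and each carries an equal share of the total. The function name `ripple_pk_pk` is illustrative.

```python
import math


def ripple_pk_pk(phases, samples=3600):
    """Peak-to-peak ripple of the summed output current for `phases`
    evenly shifted rectified sinusoids, normalized so that a single
    phase has a peak-to-peak ripple of 1.0 (idealized model)."""
    totals = []
    for i in range(samples):
        theta = math.pi * i / samples
        total = sum(abs(math.sin(theta + k * math.pi / phases))
                    for k in range(phases)) / phases
        totals.append(total)
    return max(totals) - min(totals)


if __name__ == "__main__":
    single = ripple_pk_pk(1)
    for n in (1, 2, 4):
        r = ripple_pk_pk(n)
        print(f"{n} phase(s): ripple {r:.4f} (reduction x{single / r:.1f})")
```

In this simplified model the average current is the same for any phase count while the peak-to-peak ripple drops sharply as phases are added. The exact reduction in a real resonant converter depends on the actual current waveforms, so the result should be read as a qualitative illustration and not as a reproduction of the 18-fold figure.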

The modular distribution also helps thermal performance. Instead of concentrating losses in high-voltage devices, the architecture uses low-voltage GaN FETs with lower effective RDS(on), which reduces conduction losses and spreads heat for easier cooling. Phase interleaving also simplifies transformer design and improves EMI performance.

The design uses EPC2305 (150 V GaN) devices on the primary side and EPC2366 (840 µΩ GaN) devices on the secondary side, both in compact QFN packages. The resonant converter has a large magnetizing-to-resonant inductance ratio that deliberately separates poles and zeros so that gain stays stable across load changes. As a result, switch-node waveforms remain almost unchanged from no-load to full-load operation.

The measured system efficiency peaks above 98% and stays around 96.5% at 500 A output, including housekeeping power. Open-loop voltage sharing across the cascaded modules keeps regulation at full load within ±0.3%, which demonstrates strong intrinsic balancing without complex closed-loop control.
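An efficiency figure translates directly into heat that the cooling system must remove. The sketch below estimates the dissipated power and the average heat flux over the footprint at the high-current operating point. It assumes that the 500 A point corresponds roughly to the 6 kW rated output and reuses the 5000 mm² footprint quoted earlier. Both function names are illustrative.

```python
def dissipated_power(p_out_w, efficiency):
    """Power lost as heat (W) for a given output power and efficiency."""
    return p_out_w * (1.0 / efficiency - 1.0)


def heat_flux_w_per_cm2(loss_w, footprint_mm2):
    """Average heat flux over the converter footprint (W/cm^2)."""
    return loss_w / (footprint_mm2 / 100.0)  # 1 cm^2 = 100 mm^2


if __name__ == "__main__":
    # Assumption: about 6 kW output at the 96.5% efficiency point.
    loss = dissipated_power(6000.0, 0.965)
    flux = heat_flux_w_per_cm2(loss, 5000.0)
    print(f"Loss: {loss:.1f} W, average heat flux: {flux:.2f} W/cm^2")
```

Under these assumptions the converter dissipates a little under 220 W, or somewhat more than 4 W/cm² averaged over the footprint, which gives a feel for why low-loss devices matter when cooling is applied from one side only.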

A companion 800 V-to-50 V architecture achieves further power density gains by integrating its magnetic structures. Flux cancellation between two transformers cuts the core volume in half and allows the converter to handle 11–12 kW at the same switching frequency. Power per module rises to 2.75 kW, which makes it straightforward to scale across ±400 V and 800 V server distribution rails.

These results show that MHz-class GaN resonant ISOP architectures are becoming a practical foundation for power delivery in future high-voltage data centers.

AI needs better transistors - not just better algorithms

As AI accelerators continue to increase current demand and switching dynamics become more aggressive, traditional silicon MOSFET architectures face fundamental physical limitations. GaN devices, by contrast, offer a path toward maintaining efficiency while increasing switching speed and reducing footprint.

In other words, GaN is not replacing MOSFETs everywhere - but it is replacing them where performance scaling matters most.

Artificial intelligence is often described as a software revolution powered by advanced processors. In reality, it is equally a hardware revolution driven by improvements in power conversion efficiency.

Without efficient power delivery:

- GPUs cannot scale

- edge inference cannot miniaturize

- robotics cannot become autonomous

- hyperscale infrastructure cannot remain sustainable

GaN technology addresses these challenges directly by enabling faster switching, higher efficiency, and higher power density than legacy silicon solutions.

As AI systems continue to evolve, the question is no longer whether GaN will replace MOSFETs in selected applications.

The question is how quickly power architectures can transition to fully exploit what GaN makes possible.

Because in the era of artificial intelligence, compute may define capability - but power defines scalability.

References

- Using low-voltage GaN in ISOP Converters for AI Servers with 800 V Architecture, Bodo’s Power Systems March 2026, Alejandro Pozo and Michael De Rooij

- High-Voltage GaN Isolated Converters for Server Power Systems, APEC 2026, Michael De Rooij
